To help you better understand web scraping, a concept you are already familiar with, this article explains web crawling and web crawlers.

The WebSundew web scraping tool belongs to the large family of web crawlers, though not in a pure form. Its scope is narrower: it extracts data from specific target web sites, whereas a web crawler in general is a computer program that browses the Internet automatically to gather information. Web crawlers go by several other names, including web spiders, automatic indexers, bots, and web robots, and all of these terms can also be applied to WebSundew data extraction software.

The process these programs perform is called web crawling or web spidering. Many web sites, search engines in particular, rely on crawling to keep their data up to date. Web crawlers are mainly used to make a copy of every page they visit or to collect specific information from web pages, such as e-mail addresses.
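To make the idea concrete, here is a minimal Python sketch of a crawler that follows links and collects e-mail addresses along the way. It is only an illustration of the general technique, not how WebSundew itself works. The `crawl` function, the `PAGES` dictionary, and the `example.test` addresses are all hypothetical, and the pages are kept in memory so the sketch runs without a network connection.

```python
import re
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Deliberately simple pattern; real e-mail syntax is more permissive.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=50):
    """Breadth-first crawl from start_url; returns (visited URLs, e-mails found).

    `fetch` is a caller-supplied function mapping a URL to HTML text,
    or None when the page is unavailable.
    """
    visited, emails = [], set()
    seen = {start_url}
    queue = deque([start_url])
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # skip pages that could not be retrieved
        visited.append(url)
        emails.update(EMAIL_RE.findall(html))
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited, emails


# Hypothetical in-memory "site" standing in for real HTTP requests.
PAGES = {
    "http://example.test/": '<a href="/about">About</a> <a href="/contact">Contact</a>',
    "http://example.test/about": '<p>Write to info@example.test</p> <a href="/">Home</a>',
    "http://example.test/contact": '<p>sales@example.test</p> <a href="/missing">Gone</a>',
}

visited, emails = crawl("http://example.test/", PAGES.get)
print(visited)
print(sorted(emails))
```

To crawl real pages, `fetch` could be swapped for a function that downloads the URL. A real crawler should also respect each site's robots.txt rules and limit its request rate.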

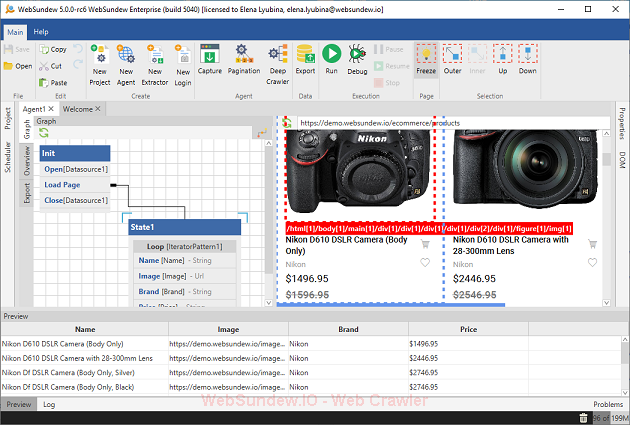

Some web crawlers, including the WebSundew data extraction tool, are designed to extract as much data as possible from one particular web site. These are called path-ascending crawlers: given a URL, they also visit every parent path above it. They are also known as web harvesting programs, because they usually gather all of a site's content.
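The path-ascending idea can be sketched in a few lines of Python. Given one URL, the hypothetical helper `ascending_paths` below lists every parent directory up to the site root, which a path-ascending crawler would then add to its list of pages to visit. The function name and the `example.test` URL are assumptions made for illustration only; this is not WebSundew's actual implementation.

```python
from urllib.parse import urlsplit, urlunsplit


def ascending_paths(url):
    """Return the URL's parent directories, from deepest to the site root."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    candidates = []
    # Drop the last segment (the page itself), then peel off one level at a time.
    for depth in range(len(segments) - 1, -1, -1):
        path = "/" + "/".join(segments[:depth])
        if depth:
            path += "/"
        # Query string and fragment are discarded for the parent URLs.
        candidates.append(urlunsplit((parts.scheme, parts.netloc, path, "", "")))
    return candidates


seed = "http://example.test/catalog/books/item.html"
for candidate in ascending_paths(seed):
    print(candidate)
```

For the seed above, the helper yields `/catalog/books/`, `/catalog/`, and `/` on the same host, so content that is not linked from the starting page can still be found.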

Web crawlers can collect the data you need much faster and in more depth than a person can. They can finish an extraction before you would have time to read or understand the site's content. Needless to say, web crawlers such as WebSundew will simplify your work and help you accomplish your tasks effectively.